Clustering algorithms help us make sense of messy data. They group similar things together. No labels. No hints. Just patterns hiding in plain sight. But which clustering algorithm works best? The answer is both simple and tricky. It depends on your data and your goal.

TL;DR: There is no single “best” clustering algorithm. K-Means is fast and simple but struggles with complex shapes. Hierarchical clustering is great for exploring structure but can be slow. DBSCAN shines with irregular shapes and noise. Choose based on your data size, cluster shape, and how much noise you expect.

Let’s break it down. Slowly. Clearly. And with a bit of fun.

What Is Clustering Anyway?

Clustering is a type of unsupervised learning. That means there are no predefined labels. The algorithm looks at the data and decides how to group it.

Imagine you spill a box of colored marbles on the floor. You have no instructions. You just sort them by what looks similar. That’s clustering.

Clustering is used in many areas:

- Customer segmentation in marketing

- Anomaly detection in fraud prevention

- Image segmentation in computer vision

- Document grouping in search engines

Now let’s compare the stars of the show.

1. K-Means: The Popular Kid

K-Means is simple. Fast. And widely used.

Here’s how it works:

- Choose the number of clusters, K.

- Randomly place K centroids.

- Assign each data point to the nearest centroid.

- Move centroids to the center of their assigned points.

- Repeat until stable.

That’s it.
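To make the loop concrete, here is a minimal sketch of those five steps in plain Python. It is an illustration, not a production implementation. The function names `kmeans` and `sq_dist`, the toy points, and the choice to start from K randomly picked data points are all assumptions made for this example.

```python
import random

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, iterations=100, seed=0):
    rng = random.Random(seed)
    # Step 2 (assumption): use K distinct data points as starting centroids.
    centroids = rng.sample(points, k)
    labels = None
    for _ in range(iterations):
        # Step 3: assign each point to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: sq_dist(p, centroids[c]))
            for p in points
        ]
        # Step 5: stop once the assignments no longer change.
        if new_labels == labels:
            break
        labels = new_labels
        # Step 4: move each centroid to the mean of its assigned points.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = tuple(sum(x) / len(members) for x in zip(*members))
    return labels, centroids

# Toy data: two tight, well-separated groups.
points = [(1.0, 1.0), (1.5, 1.5), (2.0, 2.0),
          (10.0, 10.0), (10.5, 10.5), (11.0, 11.0)]
labels, centroids = kmeans(points, k=2)
print(labels)
print(centroids)
```

Which group gets label 0 depends on the random start, so compare groupings rather than raw label numbers.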

Why People Love It

- Easy to understand

- Fast on large datasets

- Works well with clear, round clusters

Where It Struggles

- You must choose K in advance

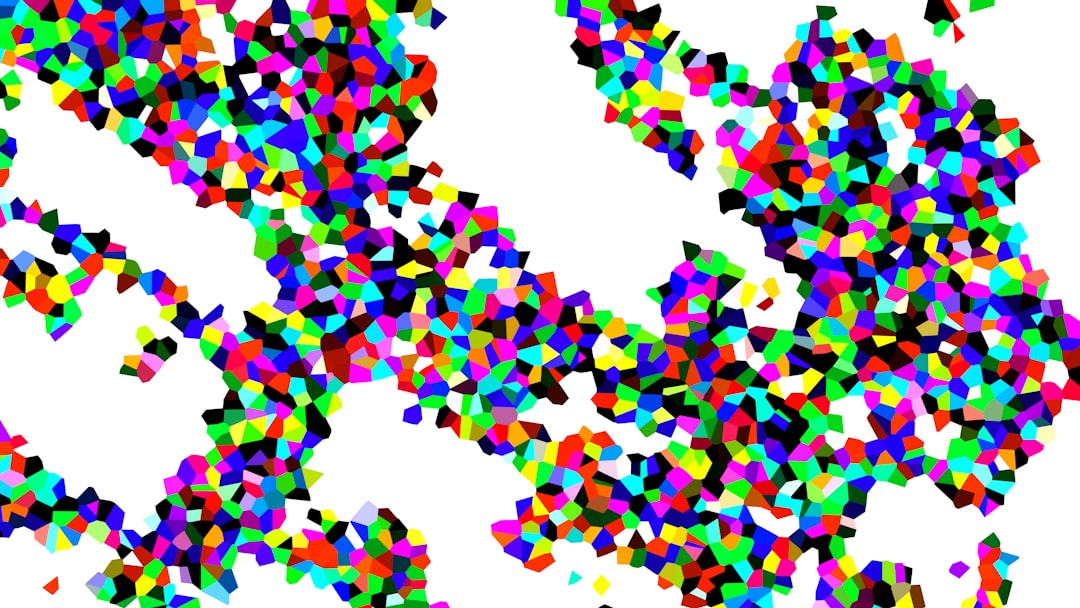

- Struggles with irregular shapes

- Sensitive to outliers

- Different runs can give different results, because the starting centroids are random

K-Means works best when your clusters are round and well separated. Think neat little bubbles.

If your data looks like spaghetti? Not so much.

2. Hierarchical Clustering: The Organizer

Hierarchical clustering builds a tree of clusters. It does not require you to choose the number of clusters right away.

There are two types:

- Agglomerative – start with individual points, merge them

- Divisive – start with all points, split them

Agglomerative is more common.

The result is a dendrogram. That’s a fancy word for a tree diagram.
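Here is a small sketch of the agglomerative idea, assuming single linkage (the distance between two clusters is the distance between their closest members) and 1-D values for readability. The function name `single_linkage` and the sample values are made up for this example. The merge history it returns is the information a dendrogram draws: which clusters joined, and at what distance.

```python
def single_linkage(values):
    """Naive agglomerative clustering on 1-D values; returns the merge history."""
    clusters = [[i] for i in range(len(values))]  # every point starts alone
    merges = []
    while len(clusters) > 1:
        # Find the pair of clusters whose closest members are nearest.
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = min(abs(values[i] - values[j])
                        for i in clusters[a] for j in clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        # Record the merge, then fuse cluster b into cluster a.
        merges.append((sorted(clusters[a]), sorted(clusters[b]), d))
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return merges

values = [0.0, 1.0, 5.0, 7.0, 20.0]
merges = single_linkage(values)
for left, right, height in merges:
    print(left, "+", right, "at distance", height)
```

Cutting the tree at a chosen distance gives you flat clusters: every merge above the cut is simply ignored. This naive version compares every pair of clusters on every pass, which hints at why the method gets slow as data grows.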

Why People Love It

- No need to pick K at the start

- Easy to visualize cluster structure

- Works well for small datasets

Where It Struggles

- Slow for large datasets

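- Computationally expensive: standard implementations need time and memory that grow at least quadratically with the number of points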

- Merges cannot be undone

Hierarchical clustering is great when you want insight. It helps you explore relationships. It tells a story about how data points connect.

But it is not built for massive datasets with millions of points.

3. DBSCAN: The Shape Detective

DBSCAN stands for Density-Based Spatial Clustering of Applications with Noise. Yes, that’s a mouthful.

But its idea is simple.

It groups points that are closely packed together. It marks lonely points as noise.

Instead of picking K, you choose:

- Epsilon (ε) – how close points must be

- MinPts – minimum number of points within ε for a region to count as dense
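A compact sketch of how those two parameters drive the algorithm, in plain Python. The names `dbscan`, `eps`, `min_pts`, and `NOISE` are choices made for this example, and it assumes a point counts as its own neighbor. Real libraries use spatial indexes instead of the brute-force neighbor search shown here.

```python
import math

NOISE = -1

def dbscan(points, eps, min_pts):
    labels = [None] * len(points)  # None means "not visited yet"

    def neighbors(i):
        # Assumption: the eps-neighborhood includes the point itself.
        return [j for j, q in enumerate(points)
                if math.dist(points[i], q) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = NOISE  # may still be claimed later as a border point
            continue
        cluster += 1  # i is a core point: start a new cluster
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: reachable, but not core
            if labels[j] is not None:
                continue
            labels[j] = cluster
            nbrs = neighbors(j)
            if len(nbrs) >= min_pts:  # j is also core, so keep expanding
                queue.extend(nbrs)
    return labels

# Two dense squares plus one lonely point in the middle.
points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 10), (10, 11), (11, 10), (11, 11),
          (5, 5)]
labels = dbscan(points, eps=1.5, min_pts=3)
print(labels)  # the lonely point (5, 5) is labeled -1 (noise)
```

Notice that nobody told the function how many clusters to find. The count falls out of `eps` and `min_pts`.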

Why People Love It

- Finds clusters of any shape

- Handles noise well

- No need to specify the number of clusters

Where It Struggles

- Hard to choose good parameters

- Poor performance when densities vary

- Less effective in high-dimensional data

DBSCAN is amazing with weird shapes. Think curves. Spirals. Blobs within blobs.

If your dataset contains outliers, DBSCAN will not panic. It simply labels them as noise. Calm and collected.

4. Mean Shift: The Smooth Operator

Mean Shift is another density-based algorithm. But it works differently from DBSCAN.

It shifts data points toward the densest area nearby. It keeps moving points until they land at a peak. These peaks form clusters.
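A tiny 1-D sketch of that "keep moving uphill" idea. It assumes a flat kernel, meaning every data point within `bandwidth` counts equally. The function name `mean_shift_1d` and the sample values are invented for this example.

```python
def mean_shift_1d(values, bandwidth, iterations=50):
    """Flat-kernel mean shift on 1-D data; returns where each point ends up."""
    positions = list(values)
    for _ in range(iterations):
        shifted = []
        for pos in positions:
            # Average the original data points within `bandwidth` of `pos`.
            window = [v for v in values if abs(v - pos) <= bandwidth]
            shifted.append(sum(window) / len(window))
        if shifted == positions:  # nothing moved: every point sits on a peak
            break
        positions = shifted
    return positions

values = [1.0, 1.5, 0.5, 5.0, 5.5, 4.5]
modes = mean_shift_1d(values, bandwidth=1.0)
print(modes)
```

Points that land on the same peak belong to the same cluster. Every pass compares each position against the whole dataset, which is where the cost comes from.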

Pros

- No need to pick the number of clusters

- Can find arbitrarily shaped clusters

Cons

- Computationally expensive

- Needs a bandwidth parameter, and results are sensitive to it

- Slow on large datasets

Mean Shift is elegant. But not always practical for big data.

5. Gaussian Mixture Models: The Soft Thinker

K-Means assigns points to one cluster only. Hard assignment.

Gaussian Mixture Models (GMM) are different. They use soft assignment. Each point has a probability of belonging to each cluster.

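GMM assumes the data comes from a mix of Gaussian distributions. It is usually fitted with the Expectation-Maximization (EM) algorithm, which alternates between estimating those membership probabilities and updating each Gaussian.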
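Here is a rough 1-D sketch of that fitting loop, to show what "soft assignment" looks like in numbers. The names `normal_pdf` and `fit_gmm_1d`, the starting means, the unit starting variances, and the tiny variance floor are all assumptions made for this example.

```python
import math

def normal_pdf(x, mean, var):
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def fit_gmm_1d(data, start_means, iterations=20):
    k = len(start_means)
    means = list(start_means)
    variances = [1.0] * k      # assumption: start every component at variance 1
    weights = [1.0 / k] * k    # assumption: start with equal mixing weights
    for _ in range(iterations):
        # E-step: probability that each component produced each point.
        resp = []
        for x in data:
            dens = [w * normal_pdf(x, m, v)
                    for w, m, v in zip(weights, means, variances)]
            total = sum(dens)
            resp.append([d / total for d in dens])
        # M-step: re-estimate each Gaussian from its softly assigned points.
        for c in range(k):
            n_c = sum(r[c] for r in resp)
            weights[c] = n_c / len(data)
            means[c] = sum(r[c] * x for r, x in zip(resp, data)) / n_c
            var = sum(r[c] * (x - means[c]) ** 2 for r, x in zip(resp, data)) / n_c
            variances[c] = max(var, 1e-6)  # floor to avoid a collapsed component
    return weights, means, variances, resp

data = [1.0, 1.5, 2.0, 3.0, 3.5, 4.0]
weights, means, variances, resp = fit_gmm_1d(data, start_means=[1.0, 4.0])
for x, r in zip(data, resp):
    print(x, [round(p, 3) for p in r])  # one probability per component
```

Each printed row sums to 1. Points near the middle of the data tend to get the most evenly split probabilities, which is exactly the information a hard assignment throws away.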

Pros

- Flexible cluster shapes

- Probabilistic output

- More expressive than K-Means

Cons

- More complex

- Can overfit

- You still need to choose the number of components

If K-Means draws circles, GMM draws ellipses. That extra flexibility can make a big difference.

How Do You Decide?

Here’s the honest answer.

It depends.

Ask yourself these questions:

- How big is the dataset?

- Are clusters round or irregular?

- Is there noise?

- Do I know how many clusters exist?

- Is speed important?

Let’s simplify this with quick advice.

Choose K-Means If:

- You have large data

- Clusters are compact and round

- You need speed

Choose Hierarchical If:

- Dataset is small or medium

- You want a visual tree structure

- You are exploring relationships

Choose DBSCAN If:

- Clusters are irregular

- You have noise and outliers

- You do not know the number of clusters

Choose GMM If:

- Clusters overlap

- You want probabilities

- Elliptical shapes fit better

What About High-Dimensional Data?

Clustering becomes harder in high dimensions. This is called the curse of dimensionality.

Distances become less meaningful. Everything starts to look equally far apart.
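You can watch this happen with a quick experiment. The sketch below scatters random points in a unit cube and measures how much farther the farthest point is than the nearest one, relative to the nearest. The function name `relative_contrast`, the point count, and the uniform random data are assumptions chosen for illustration.

```python
import math
import random

def relative_contrast(dim, n_points=200, seed=0):
    """(farthest - nearest) / nearest distance from one point to all others."""
    rng = random.Random(seed)
    pts = [[rng.random() for _ in range(dim)] for _ in range(n_points)]
    query = pts[0]
    dists = [math.dist(query, p) for p in pts[1:]]
    return (max(dists) - min(dists)) / min(dists)

for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(dim), 3))
```

As the number of dimensions grows, the contrast shrinks toward zero. "Nearest" and "farthest" stop meaning much, and distance-based clustering has less to work with.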

In such cases:

- Consider dimensionality reduction first

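- Use techniques like PCA or t-SNE (t-SNE is mainly a visualization tool and can distort distances, so be careful when clustering on its output)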

- Then apply clustering

Preprocessing often matters more than algorithm choice.

Evaluation: How Do You Know It Worked?

Good question.

Clustering has no labels. That makes evaluation tricky.

You can use:

- Silhouette score

- Davies-Bouldin index

- Calinski-Harabasz index

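A higher silhouette score (it ranges from -1 to 1) usually means better separation. Calinski-Harabasz is also higher-is-better. Davies-Bouldin is the opposite: lower is better.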
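To see what the silhouette score actually measures, here is a from-scratch sketch. For each point it compares `a`, the mean distance to its own cluster, with `b`, the mean distance to the nearest other cluster, and scores the point as `(b - a) / max(a, b)`. The function name `silhouette` and the toy data are assumptions for this example, and it assumes at least two clusters.

```python
import math

def silhouette(points, labels):
    """Mean silhouette coefficient; assumes at least two clusters."""
    scores = []
    for i, p in enumerate(points):
        # Collect distances from p to every other point, grouped by cluster.
        dists = {}
        for j, q in enumerate(points):
            if i != j:
                dists.setdefault(labels[j], []).append(math.dist(p, q))
        own = dists.pop(labels[i], None)
        if own is None:  # p is alone in its cluster: score defined as 0
            scores.append(0.0)
            continue
        a = sum(own) / len(own)                           # cohesion
        b = min(sum(d) / len(d) for d in dists.values())  # separation
        scores.append((b - a) / max(a, b) if max(a, b) else 0.0)
    return sum(scores) / len(scores)

points = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = silhouette(points, [0, 0, 1, 1])  # groups the nearby pairs together
bad = silhouette(points, [0, 1, 0, 1])   # pairs each point with a far one
print(round(good, 3), round(bad, 3))
```

The sensible grouping scores close to 1. The mismatched one goes negative, which is the score telling you points sit closer to another cluster than to their own.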

But numbers are not everything. Visualization helps a lot. Always plot your clusters if possible.

Real-World Tip: Try More Than One

Data science is not a guessing game. It is an experiment.

Run K-Means. Try DBSCAN. Compare results.

You may discover surprising patterns. One algorithm may split a cluster. Another may merge two together.

No method sees reality perfectly. Each sees it differently.

So… Which Works Best?

Here’s the big truth.

There is no universal winner.

K-Means is fast and reliable for clean, simple data. Hierarchical clustering is fantastic for structure and insight. DBSCAN wins for messy, irregular data with noise. GMM offers flexible and probabilistic clustering.

The best algorithm is the one that matches your data’s personality.

Yes. Data has personality.

Some datasets are neat and organized. Others are chaotic and dramatic. Choose the algorithm that understands them.

Final Thoughts

Clustering is both art and science. Math guides it. But intuition shapes it.

Start simple. Visualize often. Compare results. Tune parameters carefully.

And remember. The goal is not just to group data. The goal is to uncover hidden structure. To reveal patterns. To tell a story.

When you pick the right clustering algorithm, that story becomes clear.

And that’s when the magic happens.